(Updated 19 July 2017 regarding Zotero 5.)

Inspired by an email exchange with a colleague, I thought I would write a longer post on how to use the open source reference manager Zotero. Obviously all the information will in some form be available in its documentation, but at least for me the things I really need to look up are often the needle in a haystack of the obvious and the irrelevant. So here is what I think one needs to know to start using Zotero, in what is hopefully a logical order.

All of this is based on Zotero 4, as I have not yet used the newest version, 5. From version 5 onwards the standalone program is required, and Zotero no longer runs through the browser alone. See the relevant comment under Installation below.

How it works

Zotero is available for Windows, Mac and Linux, and works with LibreOffice or MS Word. It integrates into the browser (I use Firefox, but it also seems to work with Chrome, Safari and Opera), and the usual way to use it is through the browser. This means that the browser has to be open at the same time as the word processor, but there is also a standalone version that I am not familiar with.

Installation

To be honest, this is the only point on which I am a bit confused at the moment, because it has been a while since I installed one of my instances. There are three items that may have to be installed. First, there is Zotero itself from the download page, for which there are specific instructions. Then there is the "connector" for the browser you have. Finally, it may be necessary to install a word processor plug-in for your browser. What confuses me is that I seem to remember installing only the latter two last time, so either I misremember or something has changed with the newest Zotero version (?).

Either way, while the installation of Zotero into the browser itself is easy, I have noticed that sometimes the word processor plug-in does not take on the first attempt. In that case I merely repeated the installation and restarted everything, and then it worked.

Update: The colleague who has now started using Zotero has, of course, installed the newest version and kindly adds the following:

The new version of Zotero needs the standalone program installed. This is because Mozilla has dropped the engine that supported a lot of extensions (like Zotero). It was deemed that allowing the browser to carry out low-level functions on the host computer introduced inherent vulnerabilities, and so Firefox versions after 48 have very much restricted what the browser is allowed to do (no longer can it communicate directly with databases, and carry out a lot of file handling functions). The problem is not restricted to Zotero, for example Gnome desktop extensions used this functionality and have also had to change the way they do things. The long and the short is that nowadays you need the standalone application installed.

Using Zotero in the browser and building your reference library

When you have Zotero installed there are two new buttons in the browser: a "Z" that opens your reference library and a symbol right next to it that you can click to import a journal article into that library. Given that the library will at first be empty let's look at that latter function first.

Figure: The Zotero buttons in the browser

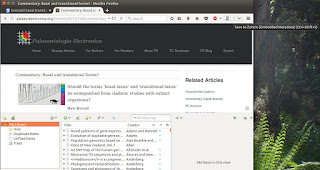

For example, I may have found a journal article through a Google Scholar search. Ideally, I am now looking at the abstract or HTML fulltext on the journal website, because that page will have all the metadata I want. I now click on the paper symbol to the right of the Z, and Zotero automatically grabs all the fields it can find and saves a new entry into my reference library; if it can get a PDF it will even download that.

Figure: Viewing paper abstract

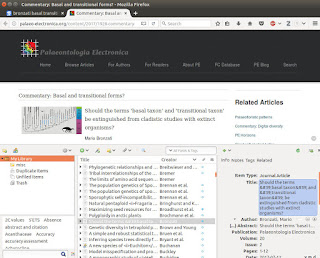

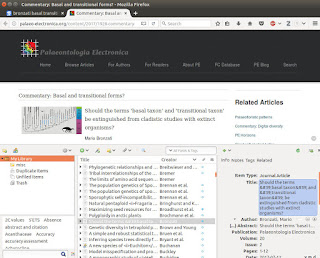

Now I click on the Z button to bring up my library, if I didn't have it open yet. In some cases I may notice that something went wrong. The typical problem is that the title of the paper is in Title Case or ALL CAPS. This is easily rectified by right-clicking on the title field and selecting "sentence case".

Figure: A reference has been added to the library
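To illustrate what a "sentence case" conversion does to a title, here is a minimal Python sketch. It is not Zotero's actual implementation; the function name `to_sentence_case` and the rule of capitalising the first letter after a colon are my own assumptions for the example.

```python
def to_sentence_case(title: str) -> str:
    """Lower-case the whole title, then capitalise the first letter
    of the title and the first letter following a colon.

    Hypothetical helper for illustration, not Zotero's own code.
    """
    result = []
    capitalise_next = True  # the very first letter gets capitalised
    for ch in title.lower():
        if capitalise_next and ch.isalpha():
            result.append(ch.upper())
            capitalise_next = False
        else:
            result.append(ch)
        if ch == ":":
            # Assumption: a subtitle after a colon starts with a capital
            capitalise_next = True
    return "".join(result)


print(to_sentence_case("A NEW SPECIES OF SENECIO FROM AUSTRALIA"))
```

Note that a blanket conversion like this also lower-cases proper nouns such as genus or country names, which is why they may have to be re-capitalised by hand afterwards.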

If something needs to be edited manually we can do so by left-clicking on the relevant field. For paper titles, the usual problems would be having to re-capitalise names after correcting for Title Case or adding the HTML tags for italics around organism names. In the present case, however, I find that the title contains HTML codes for single quotation marks instead of the actual quotation mark characters, so I quickly correct that. Manual entry is, of course, also possible for an entire reference, for example if it isn't available online. In that case simply click on the plus in the green circle and select the appropriate publication type.
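For anyone who prefers to see the quotation mark problem spelled out, the following Python sketch shows what such HTML codes look like and how they decode to the real characters. The example title and the function name `fix_title_entities` are made up for illustration; `html.unescape` is part of the Python standard library. The italics tags are left alone on purpose, since those are the ones we want to keep in the title field.

```python
import html


def fix_title_entities(raw_title: str) -> str:
    """Decode HTML character references (e.g. &#8216;) into the actual
    characters. Tags such as <i>...</i> are not entities and stay as-is."""
    return html.unescape(raw_title)


# Hypothetical title as it might arrive from a journal website
raw = "The &#8216;core&#8217; clade of <i>Senecio</i>"
print(fix_title_entities(raw))
```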

So much for importing references. It is also possible to bulk-import from the Google Scholar search results, but I would not recommend that as Google sometimes mixes up the metadata.

The style repository

The first time we try to add a reference to a manuscript, we are asked what reference style should be used. Zotero comes with only a few standard styles installed, but many more are available at the Zotero style repository. In my eyes, one of the few downsides of Zotero is that it has fewer styles than EndNote, but often it is possible to get the relevant one under a different name. If, for example, you are preparing a manuscript for Phytotaxa, the Zootaxa style should serve just as well.

Installing a new style is as easy as finding it in the style repository, clicking on its name, and confirming that it should be installed.

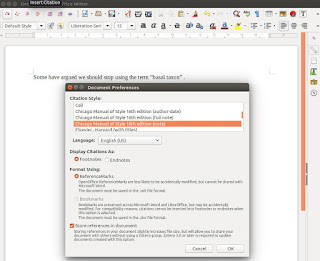

Figure: Selecting a reference style

Using Zotero in the word processor

Again, note that the browser needs to be running while we are adding references to a paper. The following assumes LibreOffice, but except for where to find the buttons everything is the same in MS Word.

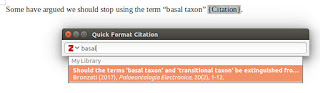

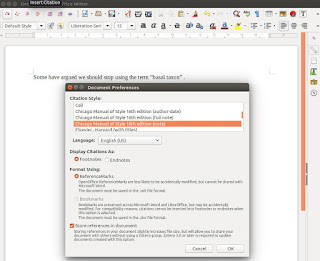

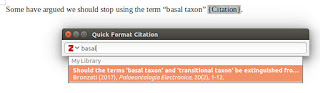

In LibreOffice you will have new buttons for inserting and editing references, for inserting the reference list, and for changing document settings, in particular the reference style. To insert a reference, click on the button that seems to read r."Z. You can now enter an author name or even just a word from the title, as in my example here, and Zotero will suggest anything that fits.

Figure: Adding a reference to a manuscript

Another downside of Zotero, at least as of version 4, which I am still using, is that it cannot produce an in-text reference like "Bronzati (2017)". Instead you can either have "(Bronzati 2017)" or reduce the reference to "(2017)". For this click on the reference in the field where you were asked to select it (if you have already entered it simply use the edit reference button showing r." and pencil) and select "suppress author". Then you have to type the author name(s) yourself outside of the brackets, which is obviously a bit annoying.

Figure: Author names outside of brackets have to be added manually
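The three forms of in-text reference discussed above can be summarised in a small Python sketch. This only mimics the resulting text and has nothing to do with how Zotero works internally; the function names `cite` and `narrative_cite` and the `suppress_author` parameter are hypothetical, chosen to mirror the menu option.

```python
def cite(author: str, year: int, suppress_author: bool = False) -> str:
    """Return the bracketed reference, with or without the author."""
    if suppress_author:
        return f"({year})"
    return f"({author} {year})"


def narrative_cite(author: str, year: int) -> str:
    """Build 'Author (year)': the author name is typed by hand outside
    the brackets, followed by the author-suppressed reference."""
    return f"{author} {cite(author, year, suppress_author=True)}"


print(cite("Bronzati", 2017))                        # standard form
print(cite("Bronzati", 2017, suppress_author=True))  # "suppress author"
print(narrative_cite("Bronzati", 2017))              # manual workaround
```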

Once we have added a few references, we obviously need to add the reference list. This is as easy as clicking the third button in the Zotero field. The only others that are usually important are the two arrows (refresh) to update the reference list (although it does so automatically when the document is reloaded) and the cogwheel (document preferences) that allows changing the reference style across the document.

In LibreOffice I have sometimes found that adding or updating references changes the format of the entire paragraph they are embedded in. This seems to happen if the default text style is at variance with the text format actually used in the manuscript. Selecting a piece of manuscript text and setting the default style to fit its format has always rectified the situation for me.

Syncing

It is useful to get an account at the Zotero website and use it to sync one's reference library across computers. Again, this works cross-platform. I do it between a Windows computer at work and my personal Linux computer at home. Note, however, that it only syncs the metadata, not any fulltext PDFs that have been saved.

To sync, go into the browser and click on the Z symbol to open Zotero. Now click the cogwheel and select preferences. The preferences window has a sync tab where you can enter your username and password. Do the same on two computers and they should share their reference libraries.